Bayesian and frequentist approaches to inference or prediction are very different. How different? This simple example highlights the difference and makes the case for using Bayesian posterior probabilities.

Category: Bayesian Thinking

Bayesian thinking is about answering the right question: the probability that a hypothesis is true or false.

Blog 20: I Am (Probably) Wrong, Maybe

A promising treatment for COVID-19 comes from a most unusual source: an antidepressant. Is the evidence compelling? What should we believe?

Blog 19: We Won’t Get Fooled Again, Again

Many clinician researchers are attempting to "repurpose" old treatments for COVID-19. How should we evaluate purported positive findings from a small but rigorous clinical trial?

No. 16: Beware Caesar – Alcohol Consumption and Alzheimer’s Disease

Research published in respectable journals and reported by renowned press outlets can be very misleading and of questionable importance. But it helps keep funding flowing to the researchers and readers coming to the news media.

No. 15: Subgroups, Multiplicity and Bayes – A Case Study

Drug development for Alzheimer's disease has seen many failures, and various companies have had mixed results. Bayesian approaches can bring clarity to the inference and to the primary question: "Does this treatment work?"

No. 13: Unconsciously Biased and Consciously Unbiased

Implicit models in the back of our minds can creep into explicit models, creating biased predictions with societal implications.

No. 12: Models – Implicit and Explicit

If we fail to acknowledge that we have biases and assumptions that influence our assessment of 'objective facts,' then we delude ourselves. Our perception of reality and how we judge evidence are colored by our beliefs, which arise from our specific experiences.

No. 11: Some Beliefs in Priors

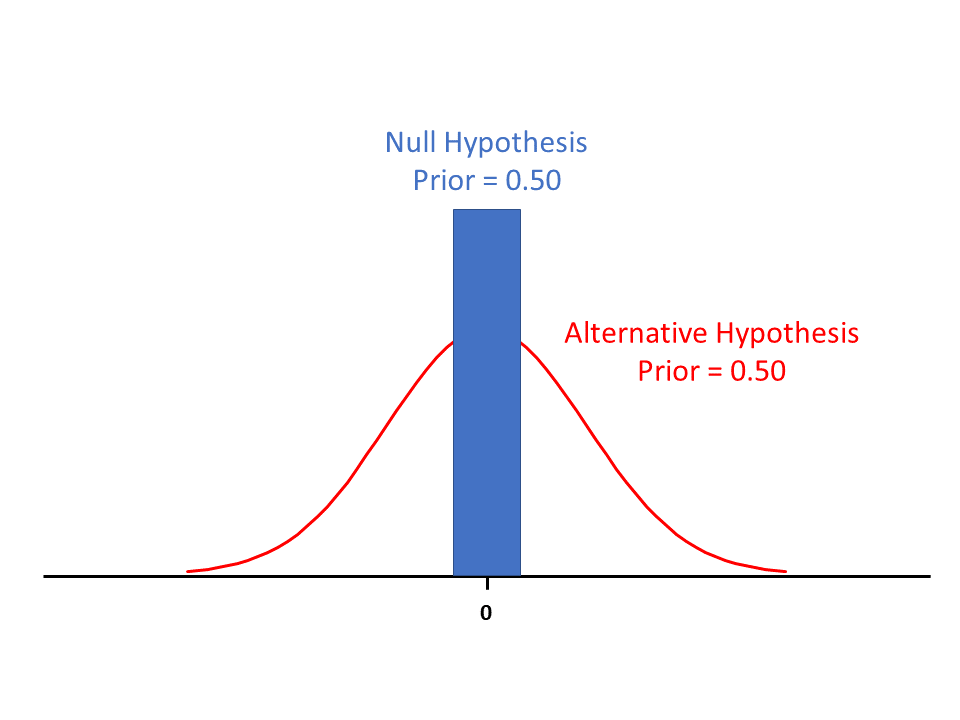

The probability that the null hypothesis is true is 0.50. How should we interpret that and then write it down mathematically?

No. 10: Always Do Subgroup IDENTIFICATION

You may have heard, “Always do subgroup analysis, but never believe them.” Don't believe this.

No. 9: Case Study – Genetic Subgroups and CV Disease

Over-reliance on p-values can lead to misinterpretation of data and to a $150 million bet on a subgroup with scant evidence.